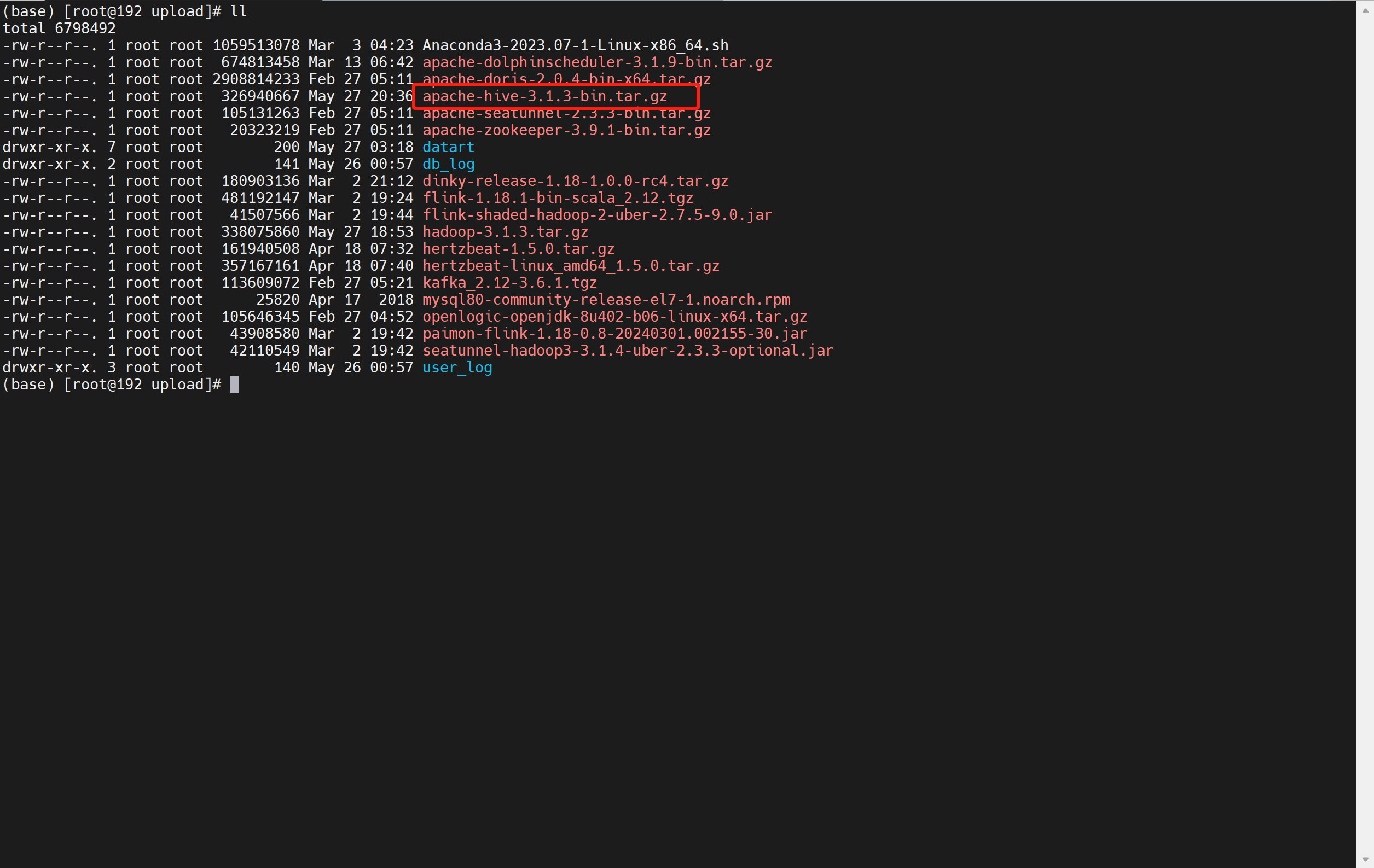

1. Upload the Hadoop archive to /opt/upload

2. Extract hadoop-3.1.3.tar.gz into /opt/software

tar -zxvf /opt/upload/hadoop-3.1.3.tar.gz -C /opt/software
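tar unpacks into the current directory unless a target is given with -C, and that target directory must already exist. A minimal sketch of the extraction step, assuming the paths used in this guide; `extract_to` is a hypothetical helper name, not part of Hadoop:

```shell
# extract_to ARCHIVE DEST: unpack a gzip-compressed tarball into DEST.
# (hypothetical helper for illustration)
extract_to() {
    archive=$1
    dest=$2
    [ -f "$archive" ] || { echo "archive not found: $archive" >&2; return 1; }
    mkdir -p "$dest" || return 1      # tar -C needs an existing directory
    tar -xzf "$archive" -C "$dest"
}

# Usage with this guide's paths:
#   extract_to /opt/upload/hadoop-3.1.3.tar.gz /opt/software
```

After a successful run the installation should be under /opt/software/hadoop-3.1.3.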

3. Edit hadoop-env.sh

vim /opt/software/hadoop-3.1.3/etc/hadoop/hadoop-env.sh

Add the following line:

export JAVA_HOME=/opt/software/openjdk8
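Hadoop's scripts launch Java from the bin directory under JAVA_HOME, so a wrong path here only shows up later as a startup failure. A small sketch for checking the path beforehand, assuming the JDK location used in this guide; `check_java_home` is a hypothetical helper:

```shell
# check_java_home DIR: succeed only if DIR contains an executable bin/java.
# (hypothetical helper for illustration)
check_java_home() {
    if [ -x "$1/bin/java" ]; then
        echo "ok: $1/bin/java"
    else
        echo "no executable bin/java under: $1" >&2
        return 1
    fi
}

# Usage with this guide's path:
#   check_java_home /opt/software/openjdk8
```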

4. Edit hdfs-site.xml

vim /opt/software/hadoop-3.1.3/etc/hadoop/hdfs-site.xml

Add the following properties inside the <configuration> element:

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/opt/software/hadoop-3.1.3/tmp/dfs/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/opt/software/hadoop-3.1.3/tmp/dfs/data</value>

</property>

5. Edit core-site.xml

vim /opt/software/hadoop-3.1.3/etc/hadoop/core-site.xml

Add the following properties inside the <configuration> element:

<property>

<name>hadoop.tmp.dir</name>

<value>file:/opt/software/hadoop-3.1.3/tmp</value>

<description>A base for other temporary directories.</description>

</property>

<property>

<name>fs.defaultFS</name>

<value>hdfs://192.168.244.129:9000</value>

</property>

6. Edit workers

vim /opt/software/hadoop-3.1.3/etc/hadoop/workers

Add the following line (the host that will run the DataNode):

192.168.244.129
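With the configuration files edited, it can help to read the values back before starting anything. The sketch below is a deliberately simple text scan, not a real XML parser: it assumes each <name> and <value> sits on a single line, with the name before its value, as in the snippets above. `get_prop` is a hypothetical helper name.

```shell
# get_prop FILE NAME: print the <value> of the property called NAME.
# Assumes <name>...</name> and <value>...</value> each fit on one line.
get_prop() {
    awk -v want="$2" '
        /<name>/  { n = $0; sub(/.*<name>/, "", n); sub(/<\/name>.*/, "", n) }
        /<value>/ { v = $0; sub(/.*<value>/, "", v); sub(/<\/value>.*/, "", v)
                    if (n == want) print v }
        /<\/property>/ { n = "" }
    ' "$1"
}

# Usage with this guide's paths:
#   conf=/opt/software/hadoop-3.1.3/etc/hadoop
#   get_prop "$conf/core-site.xml" fs.defaultFS
#   get_prop "$conf/hdfs-site.xml" dfs.replication
```

Once the hdfs command is on the PATH, `hdfs getconf -confKey fs.defaultFS` reports the value Hadoop itself resolves, which is the more authoritative check.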

7. Set environment variables

vim /etc/profile

Add the following lines:

export HADOOP_HOME=/opt/software/hadoop-3.1.3

export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

export HDFS_NAMENODE_USER=root

export HDFS_DATANODE_USER=root

export HDFS_SECONDARYNAMENODE_USER=root

export YARN_RESOURCEMANAGER_USER=root

export YARN_NODEMANAGER_USER=root

Reload the configuration:

source /etc/profile
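The new variables only apply to shells that have sourced /etc/profile, so it is worth confirming that the current shell picked them up. A minimal sketch; `on_path` is a hypothetical helper that checks whether a directory is one of the entries in PATH:

```shell
# on_path DIR: succeed if DIR is one of the colon-separated entries of $PATH.
# (hypothetical helper for illustration)
on_path() {
    case ":$PATH:" in
        *":$1:"*) return 0 ;;
        *)        return 1 ;;
    esac
}

# Usage after `source /etc/profile`:
#   on_path "$HADOOP_HOME/bin"  && echo "bin is on PATH"
#   on_path "$HADOOP_HOME/sbin" && echo "sbin is on PATH"
```

Running `hadoop version` is a quick end-to-end check: it only works if both PATH and JAVA_HOME are usable.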

8. Initialize Hadoop (format the NameNode)

hdfs namenode -format
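Formatting creates a new, empty namespace, so repeating it on a NameNode that already holds data discards the existing metadata. A small guard can avoid doing that by accident. This sketch assumes the name directory configured in hdfs-site.xml above and relies on a formatted name directory containing current/VERSION; `is_formatted` is a hypothetical helper:

```shell
# is_formatted NAME_DIR: succeed if NAME_DIR already holds a formatted
# NameNode layout (detected by the presence of current/VERSION).
is_formatted() {
    [ -f "$1/current/VERSION" ]
}

# Usage with this guide's paths: format only when nothing is there yet.
#   NAME_DIR=/opt/software/hadoop-3.1.3/tmp/dfs/name
#   is_formatted "$NAME_DIR" || hdfs namenode -format
```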

9. Start HDFS

cd /opt/software/hadoop-3.1.3/sbin

./start-dfs.sh

HDFS started successfully if jps lists the following three processes:

NameNode

DataNode

SecondaryNameNode
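The check can be scripted rather than read off by eye. The sketch below reads `jps` output (one "PID ClassName" pair per line) and prints any of the three daemons that is missing. It compares whole names, because SecondaryNameNode contains NameNode as a substring. `missing_daemons` is a hypothetical helper name.

```shell
# missing_daemons: read `jps` output on stdin and print each expected
# HDFS daemon that does not appear in it. No output means all are running.
missing_daemons() {
    awk '
        { seen[$2] = 1 }                      # column 2 is the process name
        END {
            n = split("NameNode DataNode SecondaryNameNode", want, " ")
            for (i = 1; i <= n; i++)
                if (!(want[i] in seen)) print want[i]
        }
    '
}

# Usage:
#   jps | missing_daemons
```

If a daemon is reported missing, its log file under /opt/software/hadoop-3.1.3/logs is the usual place to look for the cause.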